The transition to AI-native enterprise operations relies on structural integrity rather than model intelligence. While a Large Language Model provides the reasoning capability, a rigorous AI system design provides the safety, auditability, and predictability required for production-grade software. Success in 2026 is defined by the shift from managing "black box" outputs to engineering reliable environments that constrain probabilistic models within enforceable operational boundaries.

The discourse often gravitates toward the cinematic - the fear of a "super-intelligence" outsmarting its creators or a "black box" making inscrutable decisions. While these are valid philosophical provocations, the immediate, existential risk for the enterprise is more grounded: the danger of deploying "unbounded" systems. When a system can reason but cannot be audited, or when it can act but cannot be constrained, it ceases to be an asset and becomes a liability.

The path to safety is not found in slowing down, but in engineering better. The "intelligence" of the model is a commodity - the "integrity" of the system is the breakthrough. By treating AI not as a magic oracle, but as a component of rigorous AI system design, we move from a posture of hope to a posture of control. Reliability and trust are not inherent traits of a Large Language Model; they must be engineered as emergent properties of a well-architected environment.

The Infrastructure Imperative in AI System Design

While a clever prompt can create an impressive demonstration, it cannot sustain a mission-critical operation. Building systems that are durable, observable and accountable requires a fundamental move away from viewing AI as a "black box" and toward treating it as professional-grade infrastructure. To bridge the gap between a prototype and a production-grade system, organizations must adopt an AI system design grounded in architectural rigor rather than model-centric hype.

Most organizations encounter AI as a demonstration of capability. In this stage, success is defined by the perceived intelligence of a model’s output - how well it summarizes a document or how fluently it answers a question. However, a capability demonstration only answers whether a model can do something. Production infrastructure must answer whether the system can perform that task reliably, repeatedly and safely under real operational conditions.

The transition to true infrastructure begins when the system is exposed to scale and real-world variation. At this point, costs, latency and edge cases matter more than the "cleverness" of a single response.

The Architecture of Bounded Behaviour

The defining property of infrastructure is the existence of well-defined boundaries. Because AI systems are probabilistic - their behaviour is statistical rather than rule-based - they disrupt the "hidden contract" of traditional software, in which identical inputs always yield identical outputs. To manage this inherent variability, an AI system design must be built with explicit boundaries regarding information, behaviour, operations and actions. These boundaries do not eliminate uncertainty, but they contain it within predictable, manageable limits.

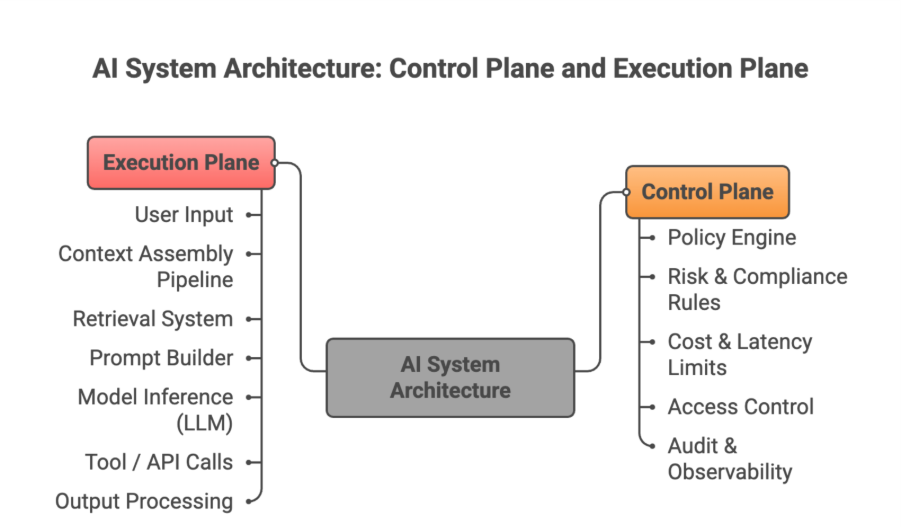

A common mistake in AI deployment is treating a model as just another microservice. Traditional services expose deterministic contracts. A model service, however, exposes a probability distribution. Durable infrastructure survives by separating Authority from Mechanism.

The Control Plane serves as the ‘authority’, where organizational intent, risk tolerance and compliance rules are encoded as enforceable artifacts. This must exist outside the model's reasoning process.

The Execution Plane serves as the ‘mechanism’, the dynamic environment where context is assembled and models are invoked. When these layers are intertwined, every model upgrade or prompt tweak becomes a risky renegotiation of authority within the AI system design.
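As a minimal sketch of this separation, assuming a hypothetical `Policy` artifact and a stand-in `fake_model` function (neither comes from a real library or from the AI Matic framework), the control plane can be expressed as a deterministic check that the execution plane must pass before any model output is released:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Policy:
    """Control-plane artifact (hypothetical): authored and versioned outside the model."""
    allowed_actions: frozenset
    max_output_chars: int


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def authorize(policy: Policy, action: str, output: str) -> Decision:
    """Deterministic check that never consults the model's own reasoning."""
    if action not in policy.allowed_actions:
        return Decision(False, f"action '{action}' is not permitted")
    if len(output) > policy.max_output_chars:
        return Decision(False, "output exceeds size limit")
    return Decision(True, "ok")


def execute(policy: Policy, model: Callable[[str], tuple], prompt: str) -> str:
    """Execution plane: invoke the model, then defer to the policy."""
    action, output = model(prompt)
    decision = authorize(policy, action, output)
    if not decision.allowed:
        return f"REFUSED: {decision.reason}"
    return output


def fake_model(prompt: str) -> tuple:
    """Stand-in for a real model call; returns (proposed_action, text)."""
    if "refund" in prompt:
        return ("issue_refund", "Refund issued.")
    return ("answer_question", "Here is a summary.")


# Assumed policy: this deployment may only answer questions.
policy = Policy(allowed_actions=frozenset({"answer_question"}), max_output_chars=200)

print(execute(policy, fake_model, "summarize this"))
print(execute(policy, fake_model, "please refund me"))
```

Swapping `fake_model` for a different model or prompt leaves `policy` and `authorize` untouched, which is the point of keeping authority outside the mechanism.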

Context as a Modern Pipeline for AI System Design

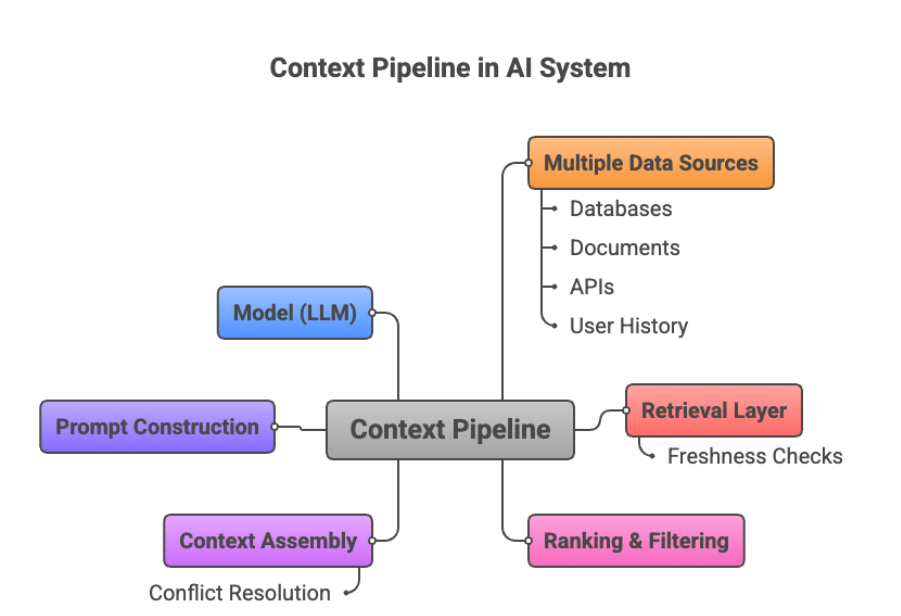

In a production environment, "hallucination" is rarely a creative aberration of the model. It is a signal that system boundaries were incomplete. If a model produces plausible but incorrect content, it is often because the system failed to define what information was authoritative or when uncertainty should have triggered a refusal.

Therefore, context must be engineered as a first-class artifact within the AI system design, with the same rigor applied to APIs or database schemas. This requires formalizing context contracts, ensuring data freshness and defining deterministic precedence for conflicting information.
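One way to picture such a context contract is the sketch below. The source names, the precedence order and the 24-hour freshness window are illustrative assumptions, not values taken from any particular system:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ContextItem:
    key: str
    value: str
    source: str
    fetched_at: datetime


# Assumed precedence: a lower number wins when two sources disagree.
SOURCE_PRECEDENCE = {"system_of_record": 0, "knowledge_base": 1, "user_upload": 2}
# Assumed freshness window; anything older is treated as stale.
MAX_AGE = timedelta(hours=24)


def _rank(item: ContextItem) -> tuple:
    # Sort by source precedence first, then prefer the most recently fetched item.
    return (SOURCE_PRECEDENCE[item.source], -item.fetched_at.timestamp())


def assemble_context(items: list, now: datetime) -> dict:
    """Return one authoritative value per key, or omit the key entirely."""
    resolved = {}
    for item in items:
        if item.source not in SOURCE_PRECEDENCE:
            continue  # unknown sources are never authoritative
        if now - item.fetched_at > MAX_AGE:
            continue  # stale data is dropped rather than passed to the model
        current = resolved.get(item.key)
        if current is None or _rank(item) < _rank(current):
            resolved[item.key] = item
    return {key: item.value for key, item in resolved.items()}


now = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
items = [
    ContextItem("price", "10", "knowledge_base", now - timedelta(hours=1)),
    ContextItem("price", "12", "system_of_record", now - timedelta(hours=2)),
    ContextItem("stock", "5", "system_of_record", now - timedelta(hours=48)),
    ContextItem("price", "9", "scraped_web", now),
]
context = assemble_context(items, now)
print(context)
```

Here the conflicting `price` values resolve to the system of record, while the stale `stock` entry is left out so that a downstream step can refuse rather than guess.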

Traditional reliability is built on sameness. In AI, reliability is built on the consistency of boundary adherence. A reliable AI system design is one that exhibits predictable refusal behavior, stable adherence to cost ceilings and consistent escalation patterns when uncertainty is high. By encoding acceptability criteria into the architecture, we move away from blaming the model for unexpected outcomes and toward structural accountability. When constraints are enforceable in code rather than just documented in manuals, trust becomes an emergent property of the system's design.
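A rough sketch of acceptability criteria encoded in code might look like the following. The thresholds, the `confidence` score and the `grounded` flag are assumptions for illustration; how a real system estimates them is outside the scope of this sketch:

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ANSWER = "answer"
    REFUSE = "refuse"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Limits:
    max_cost_usd: float    # hard cost ceiling per request (assumed unit)
    min_confidence: float  # below this, hand off to a human (assumed 0..1 scale)


def route(limits: Limits, estimated_cost_usd: float, confidence: float, grounded: bool) -> Outcome:
    """Apply the same boundary checks, in the same order, on every request."""
    if estimated_cost_usd > limits.max_cost_usd:
        return Outcome.REFUSE    # the cost ceiling is never exceeded
    if not grounded:
        return Outcome.REFUSE    # no authoritative context, so no answer
    if confidence < limits.min_confidence:
        return Outcome.ESCALATE  # high uncertainty goes to a person
    return Outcome.ANSWER


limits = Limits(max_cost_usd=0.05, min_confidence=0.7)
print(route(limits, estimated_cost_usd=0.01, confidence=0.9, grounded=True))
print(route(limits, estimated_cost_usd=0.01, confidence=0.5, grounded=True))
```

The model's text may vary from run to run, but for the same cost, confidence and grounding inputs the routing decision is always the same, and that is the kind of consistency this section describes.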

At GoML, we recognize that the gap between a successful pilot and a resilient production system is paved with engineering discipline. While the industry is flooded with demonstrations of what is possible, our focus remains steadfast on what is sustainable and scalable.

This commitment to reliability is codified in our AI Matic framework. AI Matic is a rigorous delivery discipline designed to move generative AI out of the sandbox and into the core of enterprise operations. By automating the enforcement of boundaries - informational, behavioral and operational - AI Matic ensures that every system we deploy is accountable by design and durable under stress.

Our 120+ successful production deployments are a testament to our excellence in applied AI engineering. We specialize in the complex "last mile" of deployment.

To explore the full engineering posture required to take accountable and sustainable AI systems from pilot to production, read our full whitepaper on AI system design.
